The End of the Pipeline: Why Native-Audio LLMs Are Killing the STT→LLM→TTS Stack

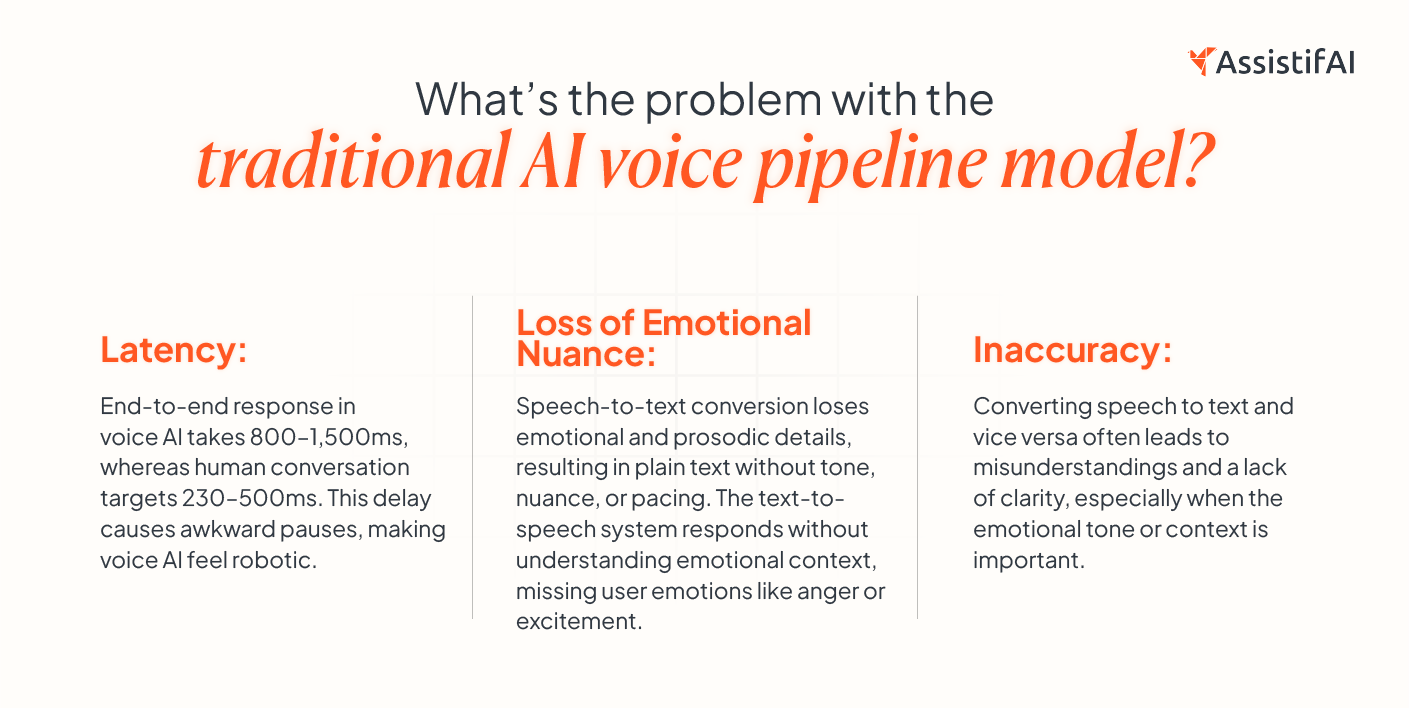

Why do traditional AI voice models sound robotic? Because they rely on a chain of three disconnected models—STT, LLM, and TTS. These are used to convert speech into actionable data and back to speech.

In a traditional AI voice pipeline, the first stage, or STT, guesses the words, the LLM responds to the text, and the TTS model rebuilds speech from scratch.

And what do we get? An emotionless and lifeless output.

Every second a customer waits for your voice agent to respond, trust drains. Humans expect responses within 300–500 milliseconds.

When AI voice agents exceed this threshold, conversations feel robotic, leading to increased abandonment rates and damaged customer trust.

There is a solution for these concerns. Native Audio LLM architecture collapses this stack and processes raw audio directly.

But why does this matter? Let’s see.

TL;DR: The End of the Pipeline

- Problem: Traditional voice assistants experience latency and loss of emotional nuance due to their multi-step pipeline, which includes speech-to-text (STT), LLM processing, and text-to-speech (TTS).

- Shift: Native-audio LLMs don’t follow the intermediate steps. Instead, they process audio directly, keeping raw information and emotional nuance.

- Fix: The new AI voice model simplifies voice interaction by approaching human conversational timing of ~230 ms, providing a smoother, more natural user experience.

Why does the Traditional AI voice pipeline slow down real conversations?

Every handoff adds delay. Every delay breaks the call.

The traditional voice assistant’s STT→LLM→TTS pipeline is a three-stage voice AI architecture in which a speech-to-text model converts audio to text, a language model generates a text response, and a text-to-speech model converts that response back to audio. Each stage works separately and passes output to the next.

Why Native-Audio LLM is a Game-Changer?

First of all, Audio LLM architecture treats audio as a first-class input format to be converted into text. Audio is tokenized and compressed into discrete representations that capture both content and acoustic characteristics, and the model processes those tokens directly.

How does this new model work?

One system. No handoffs. No broken context.

End-to-end audio models take speech directly as an audio signal, so they don't need to turn it into text first.

This allows the model to:

- Analyze Prosody and Emotion: The model can detect minute changes in tone, pitch, and pace that are absent in the traditional pipeline.

- Increase Speed and Efficiency: Without text-conversion delays, the audio LLM architecture generates responses faster, reducing latency to near-real-time levels.

- Improve Natural Interactions: Conversational AI produces smoother, more natural conversations by directly processing audio.

-

What do businesses actually gain from native audio models?

- Faster Response Times: By avoiding the step to convert speech into text and back, native-audio LLMs can provide faster, more fluid responses.

- Preservation of Emotional Context: By directly processing the audio, these models capture emotional nuances, delivering a more human-like interaction.

- Improved Accuracy: The model processes the original audio, reducing errors caused by transcription.

How does this change revenue, conversions, and support cost?

The advantages of native-audio LLMs for companies employing conversational AI in customer service, sales, or any other customer-facing role are evident:

- Better Customer Satisfaction: Faster, more accurate answers improve the user experience.

- More Engaging: Emotional nuance and prosody make conversations more interesting and relatable.

- Scalability: Businesses can handle more customer interactions without losing quality if they can respond faster and more accurately.

The pipeline architecture was never set up for conversation. It was designed for convenience, stitching together three already existing models. That shortcut is now a liability.

Gartner predicts that conversational AI will reduce customer service costs by an estimated $80 billion by 2026, with automation driving 1 in 10 customer interactions. But that ROI will vanish soon if your voice agent sounds like a robot while buffering.

You should note that the brands that are winning in voice AI are not winning in features. They are winning on feeling—the feeling comes from latency, prosody, and emotional coherence. The architecture level determines the success or failure of all three.

How to Pick the Right Tool for Your Business?

Before selecting a native-audio LLM, businesses should consider these things:

- Real-time Processing: Ensure the AI voice tool listens, understands, and responds to the customer immediately.

- Emotional Awareness: Always look for AI voice models that can interpret the intent or emotion behind the words. This ensures the assistant is not just aware of what was said but what was meant (a question, complaint, or request).

- Customization Options: Make sure your AI voice tool can be tailored to your business needs, whether that means changing the voice or incorporating industry-specific jargon.

Common Mistakes to Avoid

- Underestimating Latency: Every native-audio LLM operates at different speeds. Put them to the test in real-life situations.

- Ignoring Emotional Context: Not all native-audio models can capture the emotional depth of your message. Ensure your AI voice model delivers the emotional intelligence your business needs.

- Don't forget about integration: Make sure the solution works well with the systems and platforms you already have.

Real-World Example: How AssistifAI Revolutionized Customer Support

AssistifAI, an all-in-one AI system for conversations, workflows, and execution, uses native-audio LLM technology. It directly processes our raw audio effortlessly. AssistifAI can reduce support tickets by 43% within 30 days while improving the emotional accuracy of their AI interactions. 380+ businesses saw a visible difference in customer service after embedding AssistifAI.

Lag kills live calls.

If a voice assistant pauses or takes too long to respond, the call starts to fall apart. People become frustrated and may seek the help of your competitors.

AssistifAI is built to cut that delay. It is a voice-first, zero-code system designed for speed and accuracy. It can handle customer conversations, book appointments, run phone calls, trigger workflows, and capture conversation insights across the web, WhatsApp, and phone.

The Future of AI Voice Assistants with Native Audio LLMs

Native-audio LLMs are making a big impact in conversational AI. Modern AI voice models eliminate the need for the traditional STT→LLM→TTS pipeline. It’s time to shift attention to native-audio LLMs that enable faster, more emotionally mature interactions, improving both the user experience and overall engagement. The technology is here, and businesses should evolve with it.

Want to take your customer interactions to the next level? Explore how AssistifAI’s native-audio LLMs can help transform your business today.

Create a free assistant today.

FAQs

What is a native-audio LLM?

A native-audio LLM directly processes audio signals without needing to convert them into text and back. This leads to faster, more accurate, and emotionally intelligent interactions.

Why is emotional nuance important in voice assistants?

Understanding emotional nuance will make AI voice assistants sound more human. Tone, pauses, pitch, and pace tell you if someone is frustrated, confused, urgent, or ready to act. If a voice assistant ignores that, it gives the right answer in the wrong way.

How does latency impact customer experience?

Latency, or delay in response time, can negatively impact user experience. Native-audio LLMs solve the problem by processing speech in real time, enabling rapid responses.

Can native-audio LLMs be integrated into existing systems?

Yes, native-audio LLMs work well with existing platforms, improving both efficiency and the quality of customer interactions.

What is the problem with the traditional AI voice pipeline?

The traditional STT → LLM → TTS pipeline processes speech in three separate steps, introducing delays and breaking conversational flow. This results in higher latency, fewer natural responses, and limited emotional expression. It also increases the chances of errors at each stage, reducing overall accuracy.

Read More Blogs